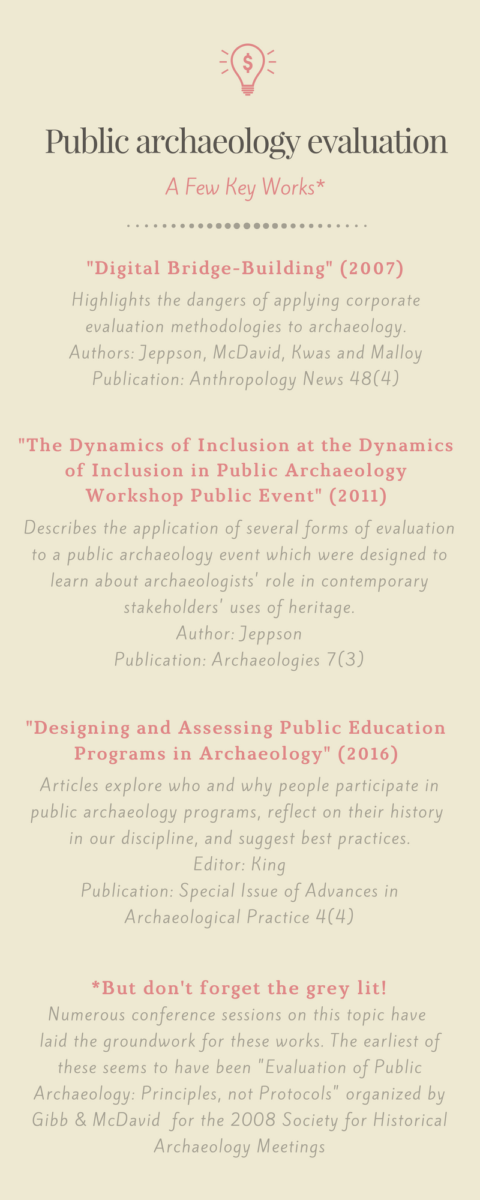

This has become clear over the past ten years as conference sessions on the topic have become common. The PEIC-sponsored session at this year’s SHA meeting, “Motivations and Community in Public Archaeology Evaluation” (organized by Kate Ellenberger & Kevin Gidusko), is the latest in a long line of conference sessions and activities devoted to public archaeology evaluation, a line that began in 2008. In this session alone, authors from academia, CRM, non-profits, and the federal government described setting outreach goals and evaluating their progress toward them. Though the published literature on the topic may be thin, there is much support for pursuing evidence-based public archaeology (see the infographic below).

Evaluation can be done for little cost, in a short time, and for a narrow use, or it can be scaled up for larger, more extensive applications. At any size, implementing evaluation or self-assessment seems to have a positive impact on public archaeology projects.

Evaluation can be done by people at all skill levels with a little research, consistency, and persistence. Although we advocate the use of evidence-based evaluation practices where appropriate (such as those developed by education professionals and program analysts), we also acknowledge that archaeology work is so variable that existing practices may not fit everyone’s needs. There are numerous tools developed within and outside archaeology to understand what people are learning at your program, who is showing up, and what impact your work might be having on your target audiences (see infographic for a few leads).

Even in this year’s SHA session, colleagues from all sectors – CRM, academia, non-profits, the federal government, and independent research – were able to conduct evaluations. Each of them identified strengths, weaknesses, and future directions by strategically evaluating how their programs went. They employed surveys, statistical analysis, and participant observation to assess whether public archaeology programs had met their goals. Only two of them had specialty training in statistics or evaluation. There should be no doubt that any archaeologist can evaluate; lacking a specialist is not an excuse.

Evidence-based public archaeology is good for archaeologists and for the publics they interact with. Even though public engagement projects have a wide variety of goals, clearly articulating and following up on those goals is good research practice. Being able to demonstrate that we have made some progress in public perceptions of archaeology is important if we expect to be able to continue outreach work. It also helps to see patterns of successes and failures across our work so we may better adjust our future engagements.

This post written by: Kate Ellenberger